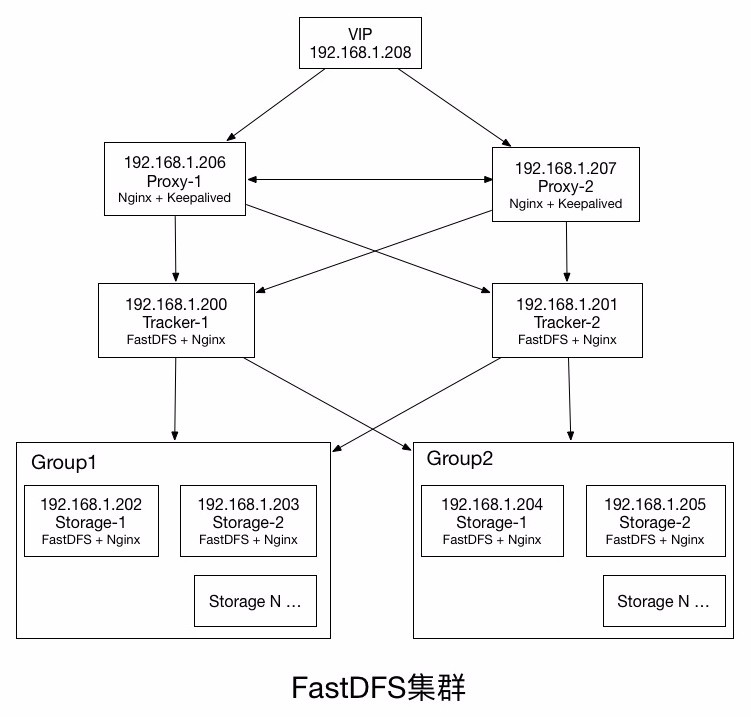

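FastDFS cluster: use cases

1. FastDFS addresses large-capacity file storage and highly concurrent access, and balances load across servers when files are stored and retrieved.

2. FastDFS implements RAID in software, so inexpensive IDE disks can be used for storage, and storage servers can be added online.

3. FastDFS is particularly suited to medium and large websites for storing resource files (images, documents, audio, video, and so on).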

127.0.0.1 FastDFS

File storage path: /var/lib/fast-dfs/storage/path0/data/

Web access: IP + port + file path, e.g.

http://127.0.0.1/zhimegroup1/M00/00/00/CgpvZllI3-6ASBmHAAClaGOUOq8246.jpg

http://fastdfs.daily.huha.me/zhimegroup1/M00/00/00/CgpvZllI3-6ASBmHAAClaGOUOq8246.jpg

# Online environment, version: V5.11

10.8.81.63 fastdfs-tracker1

10.8.81.64 fastdfs-tracker2

10.8.82.2 fastdfs-storage

File storage path: /var/lib/fast-dfs/storage/path0/data/

Web access: IP + port + file path, e.g.

http://10.8.82.2/zhimegroup1/M00/00/00/CghSAllwXVSATP3CAAPbx_ZgX0U535.jpg

Note: the programs are started as the root user.
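The "IP + port + file path" rule for web access can be sketched as a small shell helper. build_file_url is a hypothetical name, not a FastDFS command, and the assumption that port 80 is left out of the URL is mine.

```shell
#!/bin/sh
# build_file_url is a hypothetical helper (not part of FastDFS).
# Usage: build_file_url HOST PORT FILE_ID
# The function body runs in a subshell, so its variables stay local.
build_file_url() (
    host=$1
    port=$2
    file_id=${3#/}    # tolerate a leading slash on the file ID
    if [ -z "$port" ] || [ "$port" = "80" ]; then
        # Assumption: the default HTTP port is omitted from the URL
        printf 'http://%s/%s\n' "$host" "$file_id"
    else
        printf 'http://%s:%s/%s\n' "$host" "$port" "$file_id"
    fi
)

build_file_url 10.8.82.2 80 zhimegroup1/M00/00/00/CghSAllwXVSATP3CAAPbx_ZgX0U535.jpg
```

The host, port, and file ID in the call are the ones listed for the online environment; any other combination is built the same way.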

yum install -y gc gcc gcc-c++ gcc* pcre pcre-devel zlib zlib-devel openssl openssl-devel

wget -O libfastcommon.tar.gz https://codeload.github.com/happyfish100/libfastcommon/tar.gz/V1.0.35 && tar xf libfastcommon.tar.gz

wget -O fastdfs.tar.gz https://github.com/happyfish100/fastdfs/archive/V5.11.tar.gz && tar xf fastdfs.tar.gz

wget http://nginx.org/download/nginx-1.13.9.tar.gz && tar xf nginx-1.13.9.tar.gz

wget -O fastdfs-nginx-module.zip https://codeload.github.com/happyfish100/fastdfs-nginx-module/zip/master && unzip fastdfs-nginx-module.zip

wget https://ftp.pcre.org/pub/pcre/pcre-8.41.tar.gz && tar xf pcre-8.41.tar.gz

cd pcre-8.41

./configure --prefix=/usr/local/pcre-8.41 --libdir=/usr/local/lib/pcre --includedir=/usr/local/include/pcre && make && make install

Extract the archives above: tar xf ***.tar.gz

cd /root/libfastcommon-1.0.35/ && ./make.sh && ./make.sh install

mv /root/fastdfs-5.11/ /root/fastdfs/ && cd /root/fastdfs/ && ./make.sh && ./make.sh install

cd conf

cp http.conf anti-steal.jpg mime.types /etc/fdfs/

mkdir -p /var/lib/fast-dfs/tracker

mkdir -p /var/lib/fast-dfs/storage/{base,path0}

mkdir -p /var/lib/fast-dfs/client

mkdir -p /var/lib/fast-dfs/nginx-module

cp -p /etc/fdfs/tracker.conf.sample /etc/fdfs/tracker.conf

cp -p /etc/fdfs/storage.conf.sample /etc/fdfs/storage.conf

cp -p /etc/fdfs/client.conf.sample /etc/fdfs/client.conf

Edit the configuration files:

vi /etc/fdfs/tracker.conf

base_path=/var/lib/fast-dfs/tracker

store_group=zhimegroup2

vi /etc/fdfs/storage.conf

group_name=zhimegroup1

base_path=/var/lib/fast-dfs/storage/base

store_path0=/var/lib/fast-dfs/storage/path0

# tracker address; must not be 127.0.0.1

tracker_server=192.168.10.3:22122

vi /etc/fdfs/client.conf

base_path=/var/lib/fast-dfs/client

# tracker address; must not be 127.0.0.1

tracker_server=192.168.10.3:22122

Enable the tracker service at boot:

chkconfig fdfs_trackerd on

Start the services:

fdfs_trackerd /etc/fdfs/tracker.conf start

fdfs_storaged /etc/fdfs/storage.conf start

Compiling and installing nginx on CentOS 7: https://abc.htmltoo.com/thread-635.htm

cd /root/nginx-1.13.9

./configure --prefix=/usr/local/nginx --add-module=/root/fastdfs-nginx-module-master/src

make && make install

cp /root/fastdfs-nginx-module-master/src/mod_fastdfs.conf /etc/fdfs/

vi /usr/local/nginx/conf/nginx.conf

server {

listen 80;

server_name localhost;

location ~ /zhimegroup[0-9]/M00 {

ngx_fastdfs_module;

}

}

vi /etc/fdfs/mod_fastdfs.conf

base_path=/var/lib/fast-dfs/nginx-module

# tracker address; must not be 127.0.0.1

tracker_server=192.168.10.3:22122

url_have_group_name = true

store_path0=/var/lib/fast-dfs/storage/path0

group_name=zhimegroup1

Adding a group to FastDFS

vi /etc/fdfs/mod_fastdfs.conf

--

group_count = 2

[group2]

group_name=zhimegroup2

storage_server_port=24000

store_path_count=1

store_path0=/var/lib/fast-dfs/storage/path2

--

vi /etc/fdfs/storage.conf

--

group_name=zhimegroup2

base_path=/var/lib/fast-dfs/storage/base2

store_path0=/var/lib/fast-dfs/storage/path2

http.server_port=8889

--

mkdir -pv /var/lib/fast-dfs/storage/{base2,path2}

chown -R admin.admin /var/lib/fast-dfs/storage/* # (base2 path2)

# Add nginx to the system PATH

echo 'export PATH=$PATH:/usr/local/nginx/sbin'>>/etc/profile && source /etc/profile

# Start nginx at boot by adding the following line:

vi /etc/rc.local

/usr/local/nginx/sbin/nginx

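# Make rc.local executable

chmod 755 /etc/rc.local

# Start and control nginx:

nginx # start

nginx -s reload # reload the configuration file

nginx -s reopen # reopen the log files

nginx -s stop # stop nginx

# Open the nginx port (80 by default) in the firewall

vi /etc/sysconfig/iptables # add a rule that accepts TCP port 80

service iptables restart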

vi /usr/local/nginx/conf/nginx.conf

Add the following lines to map /group1/M00 to /ljzsg/fastdfs/file/data:

location /group1/M00 {

alias /ljzsg/fastdfs/file/data;

}

nginx -s reload

# Command reference:

Upload a file: /usr/bin/fdfs_upload_file <config_file> <local_filename>

Download a file: /usr/bin/fdfs_download_file <config_file> <file_id> [local_filename]

Delete a file: /usr/bin/fdfs_delete_file <config_file> <file_id>

List storage nodes: /usr/bin/fdfs_monitor /etc/fdfs/client.conf

/usr/bin/fdfs_monitor /etc/fdfs/storage.conf

Remove a storage node from a group: /usr/bin/fdfs_monitor /etc/fdfs/client.conf delete group1 192.168.1.106

Start order: tracker --> storage --> nginx
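The start order can be captured in a small wrapper script. This is a minimal sketch: run and start_all are hypothetical helper names, the nginx path assumes the /usr/local/nginx prefix used earlier, and with DRY_RUN=1 the commands are only printed, not executed.

```shell
#!/bin/sh
# run and start_all are hypothetical helpers, not FastDFS tools.
# With DRY_RUN=1 each command is printed instead of executed.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Start the services in the documented order and stop at the first failure.
start_all() {
    run /usr/bin/fdfs_trackerd /etc/fdfs/tracker.conf start || return 1
    run /usr/bin/fdfs_storaged /etc/fdfs/storage.conf start || return 1
    # Assumption: nginx was installed under /usr/local/nginx
    run /usr/local/nginx/sbin/nginx || return 1
}

# Print the commands only; call start_all without DRY_RUN to run them.
DRY_RUN=1 start_all
```

Stopping at the first failure keeps storage from starting against a tracker that did not come up.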

Stop:

killall fdfs_trackerd

killall fdfs_storaged

/usr/bin/fdfs_trackerd /etc/fdfs/tracker.conf stop

/usr/bin/fdfs_storaged /etc/fdfs/storage.conf stop

Start:

/usr/bin/fdfs_trackerd /etc/fdfs/tracker.conf start

/usr/bin/fdfs_storaged /etc/fdfs/storage.conf start

Restart:

/usr/bin/fdfs_trackerd /etc/fdfs/tracker.conf restart

/usr/bin/fdfs_storaged /etc/fdfs/storage.conf restart

# Upload test

/usr/bin/fdfs_upload_file /etc/fdfs/client.conf /root/logo.png

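On success the command returns the file ID: group1/M00/00/00/wKgz6lnduTeAMdrcAAEoRmXZPp870.png

The returned file ID is the concatenation of the group name, the store path, two levels of subdirectories, the generated file name, and the file extension (specified by the client, mainly used to tell file types apart).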
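The structure of a file ID can be illustrated by splitting one with plain shell parameter expansion. parse_file_id is a hypothetical helper written for this note; it is not a FastDFS command and it assumes the ID has exactly the group/store path/dir/dir/file layout shown in the upload result.

```shell
#!/bin/sh
# parse_file_id is a hypothetical helper (not part of FastDFS).
# It assumes the layout GROUP/STORE_PATH/DIR1/DIR2/FILENAME.
# The function body runs in a subshell, so its variables stay local.
parse_file_id() (
    id=$1
    group=${id%%/*}      # text before the first "/"
    rest=${id#*/}
    store=${rest%%/*}    # store path marker, e.g. M00
    rest=${rest#*/}
    dir1=${rest%%/*}     # first-level subdirectory
    rest=${rest#*/}
    dir2=${rest%%/*}     # second-level subdirectory
    name=${rest#*/}      # generated file name including the extension
    case $name in
        *.*) ext=${name##*.} ;;   # text after the last "."
        *)   ext= ;;
    esac
    printf 'group=%s\nstore_path=%s\ndir1=%s\ndir2=%s\nfile=%s\next=%s\n' \
        "$group" "$store" "$dir1" "$dir2" "$name" "$ext"
)

parse_file_id "group1/M00/00/00/wKgz6lnduTeAMdrcAAEoRmXZPp870.png"
```

Note that for a name such as xxx.tar.gz this sketch reports only gz as the extension.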

http://127.0.0.1/group1/M00/00/00/wKgz6lnduTeAMdrcAAEoRmXZPp870.png

http://fastdfs.daily.huha.me/group1/M00/00/00/wKgz6lnduTeAMdrcAAEoRmXZPp870.png

# Check the tracker and storage processes: netstat -unltp | grep fdfs

Two processes should be listening: 22122 - fdfs_trackerd, 23000 - fdfs_storaged

Building a multi-machine distributed cluster

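Tracker load-balancing node 1: 192.168.1.206 dfs-nginx-proxy-1

Tracker load-balancing node 2: 192.168.1.207 dfs-nginx-proxy-2

Tracker server 1: 192.168.1.200 dfs-tracker-1

Tracker server 2: 192.168.1.201 dfs-tracker-2

Storage server 1: 192.168.1.202 dfs-storage-group1-1

Storage server 2: 192.168.1.203 dfs-storage-group1-2

Storage server 3: 192.168.1.204 dfs-storage-group2-1

Storage server 4: 192.168.1.205 dfs-storage-group2-2

HA virtual IP: 192.168.1.208

HA software: Keepalived

I. Single-machine deployment: see the previous section.

II. Note: perform the same steps on every node.

1. Configure the tracker nodes (192.168.1.200, 192.168.1.201)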

vi /etc/fdfs/tracker.conf

2. Configure the storage nodes (group1: 192.168.1.202, 192.168.1.203; group2: 192.168.1.204, 192.168.1.205)

vi /etc/fdfs/storage.conf

# Settings to change:

# enable this configuration file

disabled=false

# storage service port

port=23000

# group name (group1 for the first group, group2 for the second, and so on)

group_name=group1

# root directory for data and log files

base_path=/fastdfs/storage

# first storage directory; a second one would be store_path1=xxx, and so on

store_path0=/fastdfs/storage

# number of storage paths; must match the number of store_path entries

store_path_count=1

# tracker server IP and port

tracker_server=192.168.1.200:22122

tracker_server=192.168.1.201:22122

# port for HTTP access to files

http.server_port=8888

3. File upload test

vi /etc/fdfs/client.conf

base_path=/fastdfs/tracker

tracker_server=192.168.1.200:22122

tracker_server=192.168.1.201:22122

Run the upload command:

/usr/bin/fdfs_upload_file /etc/fdfs/client.conf /usr/local/src/FastDFS_v5.05.tar.gz

A returned file ID like the following means the upload succeeded:

group1/M00/00/00/wKgBh1Xtr9-AeTfWAAVFOL7FJU4.tar.gz

group2/M00/00/00/wKgBiVXtsDmAe3kjAAVFOL7FJU4.tar.gz

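(The returned IDs show that the two uploads of the same file were stored in different groups, group1 and group2. They could equally have landed in the same group; FastDFS decides the placement according to the storage state of the servers.)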

4. Install Nginx and the fastdfs-nginx-module on the storage nodes

Note: perform the same steps on every node.

mod_fastdfs.conf for the first group of storage servers:

connect_timeout=10

base_path=/tmp

tracker_server=192.168.1.200:22122

tracker_server=192.168.1.201:22122

storage_server_port=23000

# group name of the first storage group

group_name=group1

url_have_group_name=true

store_path0=/fastdfs/storage

group_count=2

[group1]

group_name=group1

storage_server_port=23000

store_path_count=1

store_path0=/fastdfs/storage

[group2]

group_name=group2

storage_server_port=23000

store_path_count=1

store_path0=/fastdfs/storage

mod_fastdfs.conf for the second group of storage servers:

It differs from the first group's mod_fastdfs.conf only in group_name.

vi /usr/local/nginx/conf/nginx.conf

http {

include mime.types;

default_type application/octet-stream;

sendfile on;

keepalive_timeout 65;

server {

listen 8888;

server_name localhost;

# FastDFS file access (fastdfs-nginx-module)

location ~/group([0-9])/M00 {

ngx_fastdfs_module;

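}

}

}

Notes:

A. Port 8888 must match http.server_port=8888 in /etc/fdfs/storage.conf (8888 is the default value of http.server_port). To serve on port 80 instead, change both settings.

B. When storage has multiple groups, the access path includes the group name, e.g. http://xxxx/group1/M00/00/00/xxx, and the matching Nginx configuration is:

location ~/group([0-9])/M00 {

ngx_fastdfs_module;

}

C. If downloads keep returning 404, change the first line of nginx.conf from user nobody; to user root; and restart Nginx.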
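Note A can be checked mechanically. The sketch below compares http.server_port in storage.conf with the listen ports found in nginx.conf; conf_get, nginx_listen_ports, and check_http_port are hypothetical helpers, and the parsing is deliberately simple (it assumes plain "key=value" lines and "listen PORT;" directives).

```shell
#!/bin/sh
# Hypothetical helpers; they are not part of FastDFS or nginx.

# conf_get KEY FILE: print the value of the first "KEY = value" line.
conf_get() {
    key=$(printf '%s' "$1" | sed 's/\./\\./g')    # escape dots for sed
    sed -n "s/^[[:space:]]*${key}[[:space:]]*=[[:space:]]*\([^#[:space:]]*\).*/\1/p" "$2" | head -n 1
}

# nginx_listen_ports FILE: print every numeric port of a "listen PORT;" line.
nginx_listen_ports() {
    sed -n 's/^[[:space:]]*listen[[:space:]]*\([0-9][0-9]*\).*/\1/p' "$1"
}

# check_http_port STORAGE_CONF NGINX_CONF: succeed if nginx listens on
# the port named by http.server_port. Runs in a subshell.
check_http_port() (
    want=$(conf_get http.server_port "$1")
    for port in $(nginx_listen_ports "$2"); do
        [ "$port" = "$want" ] && exit 0
    done
    echo "mismatch: http.server_port=$want is not an nginx listen port" >&2
    exit 1
)

# Only run against the real files when they exist on this machine.
if [ -f /etc/fdfs/storage.conf ] && [ -f /usr/local/nginx/conf/nginx.conf ]; then
    check_http_port /etc/fdfs/storage.conf /usr/local/nginx/conf/nginx.conf && echo "ports match"
fi
```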

Start nginx, then open the files uploaded during the test in a browser:

http://192.168.1.202:8888/group1/M00/00/00/wKgBh1Xtr9-AeTfWAAVFOL7FJU4.tar.gz

http://192.168.1.204:8888/group2/M00/00/00/wKgBiVXtsDmAe3kjAAVFOL7FJU4.tar.gz

5. Install Nginx and the ngx_cache_purge module on the tracker nodes

Note: perform the same steps on every node.

Tracker nodes: 192.168.1.200, 192.168.1.201

Nginx on the trackers provides the reverse proxy, load balancing, and caching for HTTP access.

A. Install nginx and the ngx_cache_purge module

cd /usr/local/src

tar -zxvf nginx-1.10.0.tar.gz

tar -zxvf ngx_cache_purge-2.3.tar.gz

cd nginx-1.10.0

./configure --prefix=/opt/nginx --sbin-path=/usr/bin/nginx --add-module=/usr/local/src/ngx_cache_purge-2.3

make && make install

B. Configure Nginx for load balancing and caching on the trackers

vi /opt/nginx/conf/nginx.conf

user nobody;

worker_processes 1;

events {

worker_connections 1024;

use epoll;

}

http {

include mime.types;

default_type application/octet-stream;

#log_format main '$remote_addr - $remote_user [$time_local] "$request" '

# '$status $body_bytes_sent "$http_referer" '

# '"$http_user_agent" "$http_x_forwarded_for"';

#access_log logs/access.log main;

sendfile on;

tcp_nopush on;

keepalive_timeout 65;

#gzip on;

# Cache settings

server_names_hash_bucket_size 128;

client_header_buffer_size 32k;

large_client_header_buffers 4 32k;

client_max_body_size 300m;

proxy_redirect off;

proxy_set_header Host $http_host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_connect_timeout 90;

proxy_send_timeout 90;

proxy_read_timeout 90;

proxy_buffer_size 16k;

proxy_buffers 4 64k;

proxy_busy_buffers_size 128k;

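proxy_temp_file_write_size 128k;

# Cache storage path, directory levels, shared-memory zone size, maximum disk usage, and expiry

# (the zone name must match "proxy_cache http-cache" below; the sizes are example values to adjust)

proxy_cache_path /fastdfs/cache/nginx/proxy_cache levels=1:2 keys_zone=http-cache:200m max_size=1g inactive=30d;

proxy_temp_path /fastdfs/cache/nginx/proxy_cache/tmp;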

# Servers in group1

upstream fdfs_group1 {

server 192.168.1.202:8888 weight=1 max_fails=2 fail_timeout=30s;

server 192.168.1.203:8888 weight=1 max_fails=2 fail_timeout=30s;

}

# Servers in group2

upstream fdfs_group2 {

server 192.168.1.204:8888 weight=1 max_fails=2 fail_timeout=30s;

server 192.168.1.205:8888 weight=1 max_fails=2 fail_timeout=30s;

}

server {

listen 8000;

server_name localhost;

#charset koi8-r;

#access_log logs/host.access.log main;

# Load-balancing settings for each group

location /group1/M00 {

proxy_next_upstream http_502 http_504 error timeout invalid_header;

proxy_cache http-cache;

proxy_cache_valid 200 304 12h;

proxy_cache_key $uri$is_args$args;

proxy_pass http://fdfs_group1;

expires 30d;

}

location /group2/M00 {

proxy_next_upstream http_502 http_504 error timeout invalid_header;

proxy_cache http-cache;

proxy_cache_valid 200 304 12h;

proxy_cache_key $uri$is_args$args;

proxy_pass http://fdfs_group2;

expires 30d;

}

# Access control for cache purging

location ~/purge(/.*) {

allow 127.0.0.1;

allow 192.168.1.0/24;

deny all;

proxy_cache_purge http-cache $1$is_args$args;

}

#error_page 404 /404.html;

# redirect server error pages to the static page /50x.html

#

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root html;

}

}

}

Create the cache directories that the nginx configuration above requires:

mkdir -p /fastdfs/cache/nginx/proxy_cache

mkdir -p /fastdfs/cache/nginx/proxy_cache/tmp
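Because the purge location captures everything after /purge and the cache key is $uri$is_args$args, the purge address of a cached file is its normal URL with /purge inserted before the path. A minimal sketch of that mapping follows; purge_url is a hypothetical helper, and the curl call is left commented out because it only works from an address allowed by the purge location.

```shell
#!/bin/sh
# purge_url is a hypothetical helper (not part of nginx or ngx_cache_purge).
# Usage: purge_url FILE_URL
# The function body runs in a subshell, so its variables stay local.
purge_url() (
    url=$1
    scheme=${url%%://*}      # e.g. http
    rest=${url#*://}
    hostport=${rest%%/*}     # e.g. 192.168.1.200:8000
    path=/${rest#*/}         # e.g. /group1/M00/00/00/xxx
    printf '%s://%s/purge%s\n' "$scheme" "$hostport" "$path"
)

purge_url "http://192.168.1.200:8000/group1/M00/00/00/wKgBh1Xtr9-AeTfWAAVFOL7FJU4.tar.gz"

# To issue the purge request from an allowed address:
# curl -s -o /dev/null -w '%{http_code}\n' "$(purge_url "http://192.168.1.200:8000/group1/M00/00/00/wKgBh1Xtr9-AeTfWAAVFOL7FJU4.tar.gz")"
```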

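C. Open port 8000 for Nginx in the firewall, start nginx, and enable it at boot.

D. File access test:

Earlier, the files were accessed directly through Nginx on the storage nodes: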

http://192.168.1.202:8888/group1/M00/00/00/wKgBh1Xtr9-AeTfWAAVFOL7FJU4.tar.gz

http://192.168.1.204:8888/group2/M00/00/00/wKgBiVXtsDmAe3kjAAVFOL7FJU4.tar.gz

They can now be accessed through Nginx on the trackers:

(1) Through Nginx on Tracker1

http://192.168.1.200:8000/group1/M00/00/00/wKgBh1Xtr9-AeTfWAAVFOL7FJU4.tar.gz

http://192.168.1.200:8000/group2/M00/00/00/wKgBiVXtsDmAe3kjAAVFOL7FJU4.tar.gz

(2) Through Nginx on Tracker2

http://192.168.1.201:8000/group1/M00/00/00/wKgBh1Xtr9-AeTfWAAVFOL7FJU4.tar.gz

http://192.168.1.201:8000/group2/M00/00/00/wKgBiVXtsDmAe3kjAAVFOL7FJU4.tar.gz

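As the access tests above show, Nginx on each tracker load-balances across the backend storage groups on its own.

For the FastDFS cluster as a whole to expose a single file access address, the Nginx instances on the two trackers must themselves be placed behind an HA cluster.

6. Configure high availability, reverse proxying, and load balancing for the tracker servers:

A Keepalived + Nginx high-availability load-balancing cluster balances the load across the Nginx instances on the two tracker nodes.

1> Install Keepalived and Nginx

Install Keepalived and Nginx on both 192.168.1.206 and 192.168.1.207.

Keepalived installation and configuration: https://abc.htmltoo.com/thread-636.htm

Nginx installation and configuration: see the previous section

2> Configure Keepalived + Nginx high availability

See "Keepalived+Nginx实现高可用(HA)" (high availability with Keepalived + Nginx): https://abc.htmltoo.com/thread-637.htm

Note: change the VIP address to 192.168.1.208

3> Configure Nginx to load-balance across the tracker nodes

The Nginx configuration is the same on both nodes: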

vi /usr/local/nginx/conf/nginx.conf

user root;

worker_processes 1;

events {

worker_connections 1024;

use epoll;

}

http {

include mime.types;

default_type application/octet-stream;

sendfile on;

keepalive_timeout 65;

## FastDFS Tracker Proxy

upstream fastdfs_tracker {

server 192.168.1.200:8000 weight=1 max_fails=2 fail_timeout=30s;

server 192.168.1.201:8000 weight=1 max_fails=2 fail_timeout=30s;

}

server {

listen 80;

server_name localhost;

location / {

root html;

index index.html index.htm;

}

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root html;

}

## FastDFS Proxy

location /dfs {

root html;

index index.html index.htm;

proxy_pass http://fastdfs_tracker/;

proxy_set_header Host $http_host;

proxy_set_header Cookie $http_cookie;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

client_max_body_size 300m;

}

}

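}

4> Reload Nginx on 192.168.1.206 and 192.168.1.207: nginx -s reload

5> Test file access through the virtual IP:

The files in the FastDFS cluster can now be accessed through the VIP (192.168.1.208) of the Keepalived + Nginx high-availability load-balancing cluster: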

http://192.168.1.208/dfs/group1/M00/00/00/wKgBh1Xtr9-AeTfWAAVFOL7FJU4.tar.gz

http://192.168.1.208/dfs/group2/M00/00/00/wKgBiVXtsDmAe3kjAAVFOL7FJU4.tar.gz

Note: never force-kill FastDFS processes with kill -9, otherwise binlog data may be lost.